Gemini 435LE: More Usable Depth for Real-World Robotics

Long-baseline stereo with AI-enhanced on-camera refinement for more complete depth, cleaner contours, and less host-side cleanup

In robotics, seeing farther matters. But range alone is not enough.

A robot does not make decisions from a specification sheet. It makes decisions from the depth data it receives frame by frame, in motion, under changing light, around real objects, and in environments that are rarely as clean as a lab demo. In that context, the useful question is not simply how far a depth camera can see. The more important question is how much of that depth a robot can actually use.

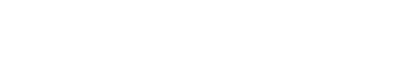

That distinction sounds subtle, but it is not. Depth that looks acceptable in a static screenshot can still be difficult for a robot to use if it contains fragmented surfaces, unstable regions, or weak object boundaries. For navigation, that can mean uncertainty in free space. For pallet-facing or measurement tasks, it can mean lost contour detail. For outdoor or semi-outdoor robots, it can mean a perception pipeline that appears fine indoors and begins to struggle when illumination and scene contrast stop cooperating.

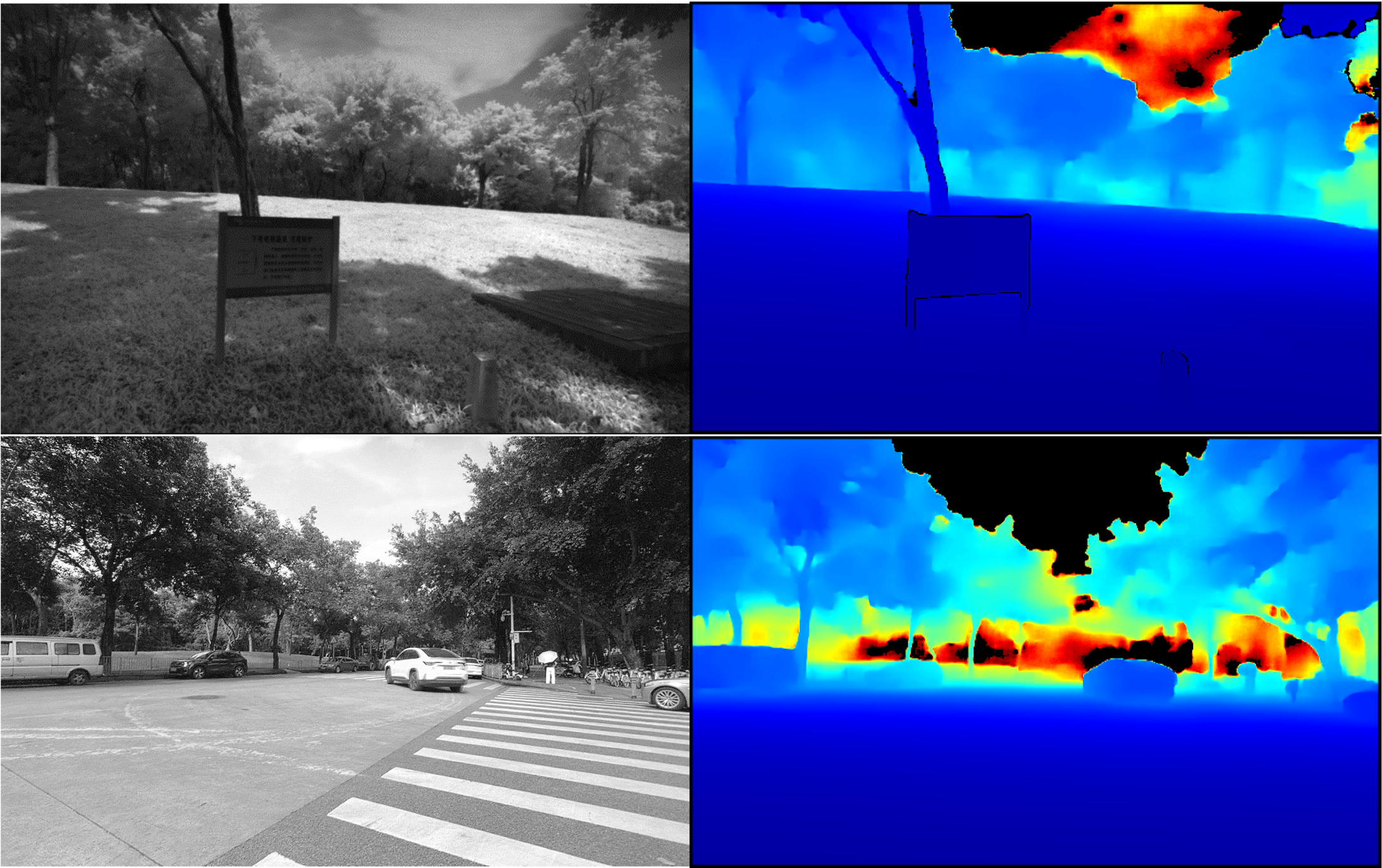

This is not a new problem, and it is not unique to one sensing stack. The research literature has been direct about it for years. Work on depth completion and depth rectification repeatedly points to the same set of challenges: missing or invalid depth values, distortions near object boundaries, and the difficulty of preserving structure where geometric transitions matter most. That is the background behind Gemini 435LE.

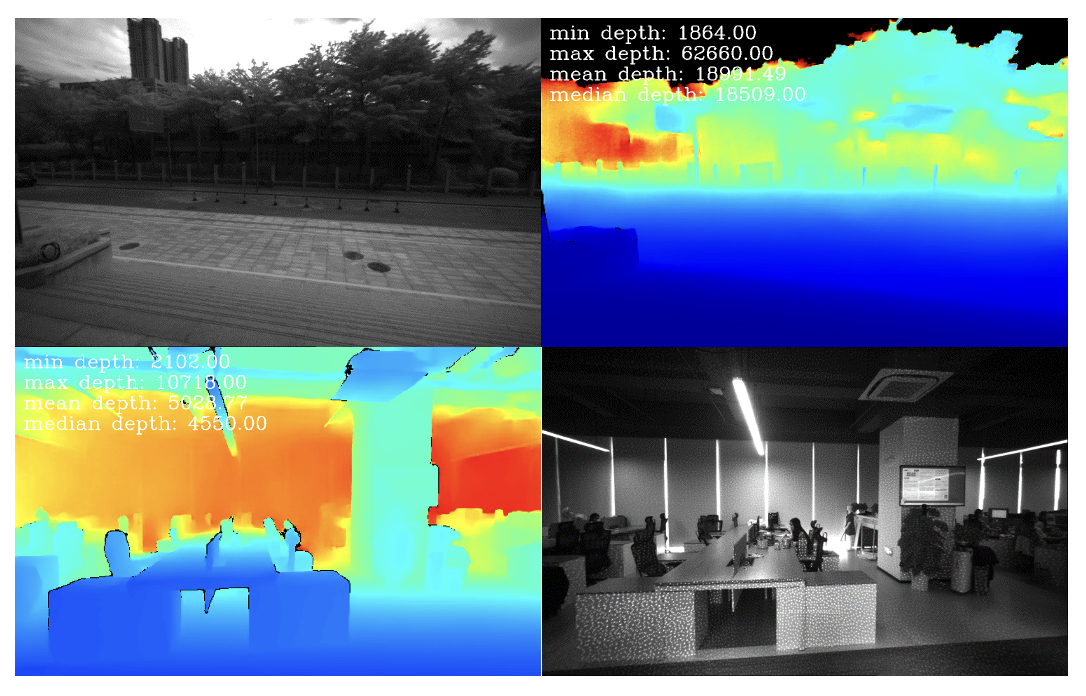

Usable Depth vs. Headline Depth

For years, depth-camera conversations have often been dominated by a handful of headline specifications: range, frame rate, field of view, and accuracy at a reference distance. Those still matter. But real robotic performance depends just as much on what happens between those lines.

A mobile robot does not need only a distant obstacle indication. It needs a reliable local world model. In ROS 2 Nav2, depth and camera data can feed costmap layers used for obstacle marking and collision avoidance, and the documentation explicitly warns that noisy sensor measurements can create false obstacles that keep the robot from finding the best path. That is not a cosmetic issue. It is a navigation issue.
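
To make the false-obstacle problem concrete, here is a minimal Python sketch. It is not Nav2 code and does not use any Nav2 API. It treats a small occupancy grid as a list of lists and clears any occupied cell that has no occupied 8-connected neighbor, loosely in the spirit of filtering noise-induced obstacles. The function name `remove_isolated_obstacles` and the grid values are illustrative assumptions.

```python
from typing import List

Grid = List[List[int]]  # 1 = occupied, 0 = free (illustrative convention)


def remove_isolated_obstacles(grid: Grid) -> Grid:
    """Return a copy of the grid with lone occupied cells cleared.

    Hypothetical helper for illustration only, not a Nav2 function.
    A cell is "lone" if none of its 8 neighbors is occupied.
    """
    rows, cols = len(grid), len(grid[0])
    cleaned = [row[:] for row in grid]
    for r in range(rows):
        for c in range(cols):
            if grid[r][c] != 1:
                continue
            has_neighbor = any(
                grid[nr][nc] == 1
                for nr in range(max(0, r - 1), min(rows, r + 2))
                for nc in range(max(0, c - 1), min(cols, c + 2))
                if (nr, nc) != (r, c)
            )
            if not has_neighbor:
                cleaned[r][c] = 0
    return cleaned


noisy = [
    [0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0],  # lone cell: plausibly a noise-induced obstacle
    [0, 0, 0, 1, 1],  # adjacent cells: kept as a real obstacle
    [0, 0, 0, 1, 1],
]
cleaned = remove_isolated_obstacles(noisy)
```

The trade-off is visible even in this toy case: a genuinely small obstacle can look exactly like noise to such a filter, which is one reason cleaner depth at the input tends to be preferable to more aggressive filtering later.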

The same principle shows up in logistics and material handling. Academic work on pallet localization for forklifts describes pallet detection as a common task in intra-logistics, with positioning information used by AGVs and human-operated forklifts to support pallet pick-up. That work also emphasizes that detection must happen while the vehicle is moving and within a real-time processing budget. In other words, the depth result is not there for visualization. It exists to support action.

Another pallet-recognition study using an RGB-D sensor reports that accuracy is tied to detection distance and angle, and specifically notes that as the detection distance approaches the edge of the depth sensor’s effective range, sensor errors begin to affect detection accuracy. That is a useful reminder that seeing something at range and preserving enough usable structure for downstream decisions are not the same thing.
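
For stereo specifically, the standard triangulation relationship gives a rough feel for why depth becomes harder to trust near the edge of the working range. Depth is z = f·B/d, so a fixed disparity error produces a depth error that grows with the square of distance and shrinks as the baseline grows. The Python sketch below illustrates this under stated assumptions: the focal length, baselines, and disparity error are hypothetical values chosen for easy arithmetic, not Gemini 435LE specifications.

```python
import math


def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Ideal pinhole stereo: z = f * B / d."""
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m: float, focal_px: float, baseline_m: float,
                disparity_err_px: float) -> float:
    """First-order depth uncertainty: dz ~= z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_err_px


# Hypothetical numbers for illustration only.
FOCAL_PX = 640.0
DISPARITY_ERR_PX = 0.1

err_short_2m = depth_error(2.0, FOCAL_PX, 0.05, DISPARITY_ERR_PX)
err_short_4m = depth_error(4.0, FOCAL_PX, 0.05, DISPARITY_ERR_PX)
err_long_4m = depth_error(4.0, FOCAL_PX, 0.10, DISPARITY_ERR_PX)
```

With these assumed values, doubling the distance quadruples the estimated error, while doubling the baseline halves it at the same distance.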

So when robotics teams evaluate depth, the question should shift. Not simply, ‘How far does it see?’ but, ‘How much trustworthy geometry does it preserve in the parts of the scene where decisions are actually made?’

Why Real Robots Expose the Weak Points of Stereo Depth

Stereo remains one of the most attractive depth approaches in robotics because it is scalable, passive, flexible, and a natural fit for many embedded systems. But real-world scenes expose the places where raw stereo output becomes harder to trust.

One well-known issue is missing or invalid depth. Official industry documentation for stereo systems openly describes holes as a normal part of stereo depth. These holes can come from occlusions, lack of texture, multiple ambiguous matches, exposure problems, or operating conditions that exceed the search range. Other vendor documentation notes that pixels at object borders are often explicitly invalidated during left-right consistency checks because occlusions make the two views disagree at those locations. In plain terms, the places robots often care about most, such as boundaries, openings, and transitions, are also the places where stereo commonly becomes more fragile.
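
The left-right consistency check mentioned above can be sketched on a single scanline. This is a simplified illustration in Python, not any vendor's implementation: the function name, the tolerance, and the convention that 0 marks an invalid pixel are all assumptions. A left pixel at column x with disparity d should land at column x - d in the right image, and the right image's disparity there should agree.

```python
from typing import List

INVALID = 0  # illustrative convention: 0 marks a hole


def left_right_check(disp_left: List[int], disp_right: List[int],
                     tol: int = 1) -> List[int]:
    """Invalidate left disparities that the right view does not confirm."""
    width = len(disp_left)
    checked = []
    for x, d in enumerate(disp_left):
        xr = x - d  # column this left pixel maps to in the right image
        if d == INVALID or xr < 0 or xr >= width:
            checked.append(INVALID)  # no valid counterpart in the right view
        elif abs(d - disp_right[xr]) > tol:
            checked.append(INVALID)  # the two views disagree (e.g. occlusion)
        else:
            checked.append(d)
    return checked


# Toy scanline: background at disparity 2, a foreground object at
# disparity 5 covering left-image columns 6..9. Left columns 3..5 are
# hidden behind the object in the right view, so the matcher's guess
# there (2) cannot be confirmed.
disp_left = [2, 2, 2, 2, 2, 2, 5, 5, 5, 5, 2, 2]
disp_right = [2, 5, 5, 5, 5, 2, 2, 2, 2, 2, 2, 2]
result = left_right_check(disp_left, disp_right)
# result == [0, 0, 2, 0, 0, 0, 5, 5, 5, 5, 2, 2]
```

In this toy case the holes appear directly beside the object boundary (columns 3 to 5) and at the image border (columns 0 and 1), which matches the pattern described above.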

This matters because boundaries are not a decorative feature of a depth map. They are where scene geometry changes meaning. Recent work on depth rectification makes the problem explicit: raw depth often contains erroneous pixels, blur, and noise around object boundaries, and correcting those boundaries remains difficult precisely because flat regions are easier to recover than discontinuous ones. That observation lines up closely with engineering experience. It is usually not the center of a large flat box that causes trouble. It is the edge of the box, the pallet opening, the rack boundary, the fork pocket, or the free-space transition next to it.

The industry’s usual answer is post-processing, which is helpful but revealing. Across stereo platforms, it is common to see configurable chains of spatial filtering, temporal filtering, median or speckle suppression, confidence-based invalidation, brightness filtering, and hole filling. Those tools exist for a reason: raw depth often needs help. But they also come with tradeoffs. Spatial filling often relies on neighboring valid pixels. Temporal filtering can improve persistence, but vendor documentation also notes that it may introduce motion blur or smearing and is best suited to static scenes. Hole filling itself is often a best-effort estimate rather than a guarantee of true geometry.
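
The temporal-filtering trade-off can be shown with a bare exponential moving average over one scanline of depth values. This is a deliberately simplified Python sketch: production filters typically add thresholds and validity handling that are omitted here, and the function name and `alpha` value are assumptions.

```python
from typing import List


def temporal_ema(prev_filtered: List[float], new_frame: List[float],
                 alpha: float = 0.5) -> List[float]:
    """Blend the new frame into the running estimate, pixel by pixel."""
    return [alpha * new + (1.0 - alpha) * old
            for old, new in zip(prev_filtered, new_frame)]


# Depth in meters along one scanline. An object at 1 m covers the first
# two pixels in frame 1, then moves so it covers only the first pixel.
frame_1 = [1.0, 1.0, 3.0, 3.0]
frame_2 = [1.0, 3.0, 3.0, 3.0]

filtered = temporal_ema(frame_1, frame_2)
# filtered == [1.0, 2.0, 3.0, 3.0]
```

The second pixel reports 2 m, a depth that exists nowhere in this scene. In a static scene the same averaging would simply reduce noise, which is why this kind of filter suits static scenes better than moving ones.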

That last point is especially important for robotics. Intel RealSense’s depth post-processing guide for the D400 series explicitly notes that for some applications, including robotic navigation and obstacle avoidance, it may be better to leave holes than to fill them with a weak guess. That does not mean refinement is a bad idea. It means that cheap filling is not the same thing as usable depth. The real objective is not to cosmetically erase zeros. The objective is to improve scene usability while preserving meaningful structure.
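
A small Python sketch shows why a naive fill can be worse than a hole. It copies the nearest valid value from the left into each hole and also returns a mask of which pixels were guessed. The function name, the fill direction, and the convention that 0.0 marks a hole are illustrative assumptions, not a description of any particular camera's filter.

```python
from typing import List, Tuple


def fill_from_left(row: List[float],
                   invalid: float = 0.0) -> Tuple[List[float], List[bool]]:
    """Fill holes with the last valid value to the left.

    Returns the filled row and a mask marking which values are guesses.
    """
    filled: List[float] = []
    guessed: List[bool] = []
    last_valid = None
    for z in row:
        if z != invalid:
            last_valid = z
            filled.append(z)
            guessed.append(False)
        elif last_valid is not None:
            filled.append(last_valid)
            guessed.append(True)
        else:
            filled.append(invalid)  # nothing to copy from yet
            guessed.append(False)
    return filled, guessed


# Depth in meters: far wall at 4 m, two holes, then an object at 1.2 m.
row = [0.0, 4.0, 0.0, 0.0, 1.2]
filled, guessed = fill_from_left(row)
# filled  == [0.0, 4.0, 4.0, 4.0, 1.2]
# guessed == [False, False, True, True, False]
```

The two filled pixels now claim free space out to 4 m, even though they sit next to an object at 1.2 m and may belong to it. A planner that cannot tell measured values from guessed ones would treat both the same, which is the risk the guidance above points to.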

This is exactly why depth refinement has remained an active research topic. The field has moved through classic inpainting, filter-based completion, RGB-guided recovery, structure-preserving networks, and increasingly efficient learning-based methods. The terminology differs, but the agenda is consistent: recover missing depth, protect edges and small structures, and do it efficiently enough for real systems.

Gemini 435LE: AI-Enhanced Refinement Where It Actually Matters

Gemini 435LE is built around that system-level view of depth quality.

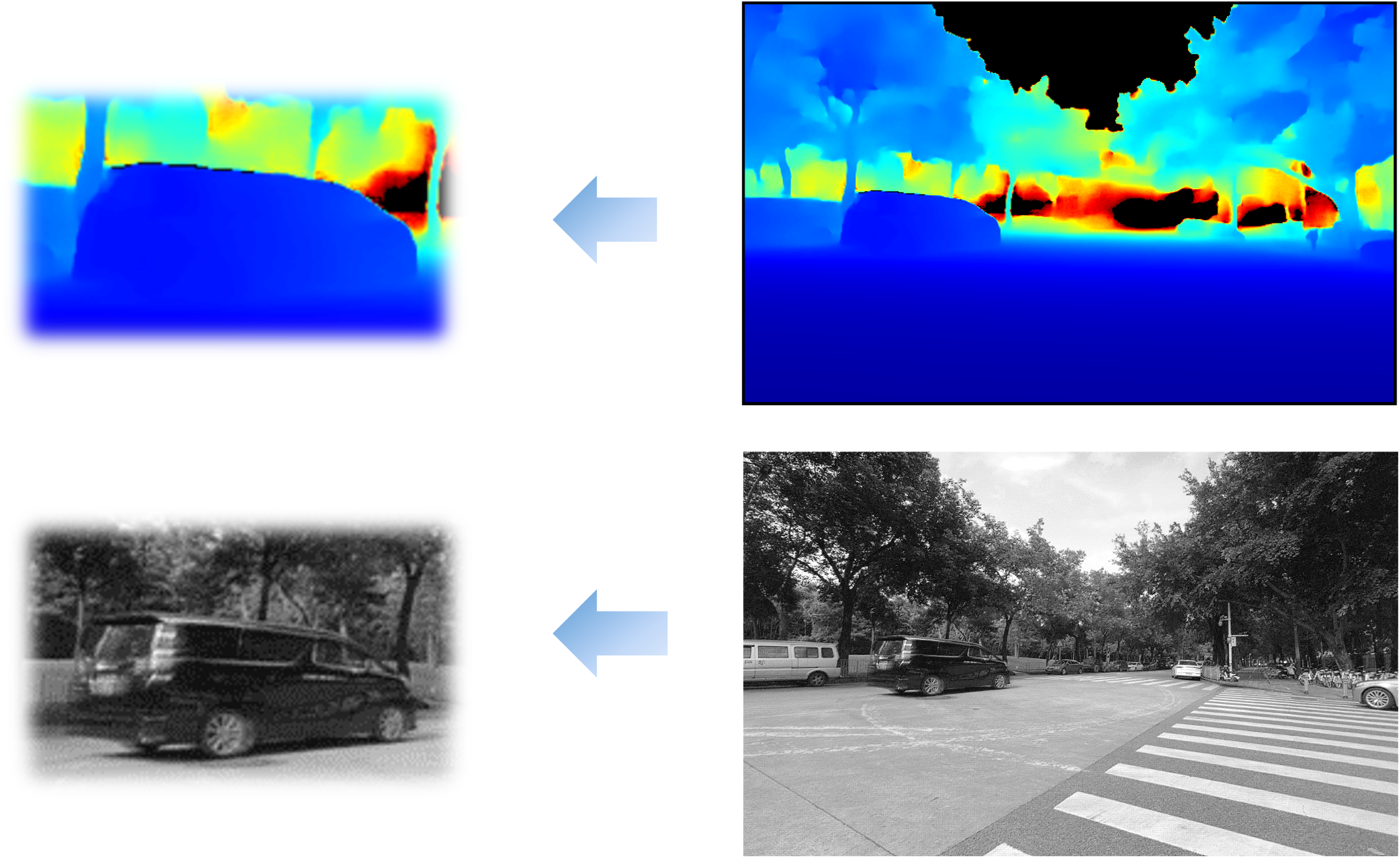

At a high level, the camera combines long-baseline stereo with dedicated onboard processing for both depth generation and AI-enhanced refinement. That architecture matters because it changes where depth quality is improved. Instead of treating the camera as a raw depth source and asking the host to repair the result later, Gemini 435LE is designed to refine the output at the camera stage.

That distinction sounds architectural, but it shows up in practical ways. First, it aims to improve depth completeness, so the scene arrives with fewer problematic regions that downstream modules must interpret or work around. Second, it aims to improve edge and contour fidelity, so boundaries remain more useful for tasks that depend on object shape and separation. Third, it reduces how much depth-specific cleanup the host needs to carry, which matters in robots where the main processor is already busy doing more valuable work.

Efficiency has become a central theme in the literature as well. Lightweight depth-completion papers explicitly frame real-world depth perception as a computational-cost problem and emphasize the need for real-time performance. Recent edge-device work on depth rectification makes the same point from another angle, arguing that inaccurate depth can hinder 3D reconstruction and visual SLAM while many stronger correction methods remain too heavy for constrained edge platforms. The message is consistent: better depth is useful, but better depth that also respects compute limits is much more useful.

That is why the phrase AI-enhanced matters here, but only if it is understood correctly. The point is not to paste AI onto a camera and hope the brochure becomes modern. The point is to improve the depth signal at the part of the pipeline where it can do the most good, while avoiding unnecessary host-side burden. Put differently, the value is not that the refinement is ‘AI’ in some abstract branding sense. The value is that the refinement is on camera, applied to a problem that robotics teams already feel every day: incomplete depth, weak contours, fragile scene geometry, and too much downstream cleanup.

What This Means in Real Robotic Scenes

1. Warehouse Navigation and AMR-Style Mobility

A warehouse robot is not only asking whether an object exists in front of it. It is constantly updating a working model of where free space is, where obstacles begin, which surfaces are reliable, and how a route should be adjusted if the local world model changes. In systems built on depth-aware navigation stacks, noisy data can become false costmap obstacles. In deployment, that means degraded local behavior long before anyone blames the camera.

This is where more complete and more stable depth becomes useful in a very practical way. It is not just about reducing the number of holes in a visualization. It is about helping the robot interpret aisle boundaries, pallet fronts, rack edges, and transitional free space with fewer fragmented regions and fewer unstable artifacts. If the robot moves through a mixed-material scene and the depth remains coherent where a maneuver depends on it, that is already a system win.

2. Pallet-Facing, Forklift, and Material-Handling Tasks

Forklift and pallet-localization research is a good reminder that industrial depth use is often unforgiving. Studies on pallet localization with 3D camera technology describe the task as real-time perception performed while the vehicle is moving, with the result fed to higher-level assistance systems. Related RGB-D pallet-recognition work reports that accuracy degrades with distance and angle and highlights that performance suffers near the edge of the sensor’s effective range. These are not edge cases. They are central to the application itself.

For scenes like pallet pick-up, dimensioning, or structured logistics handling, edge quality is often more meaningful than a dense-looking surface in the middle of an object. A depth result that preserves the geometry of pallet openings, corners, and object contours more clearly gives downstream software more to work with and less to guess. That does not make the rest of the perception stack disappear, but it reduces the amount of damage control that stack has to do.

3. Semi-Outdoor and Outdoor Robotics

Stereo has always been more demanding outdoors. Stereo-only outdoor localization work points directly to large illumination changes as a continuing challenge, and high-dynamic-range stereo research for outdoor mobile robotics shows how variable lighting and high scene contrast can cause stereo matching to fail in incorrectly exposed regions of the input images.

This matters for loading zones, warehouse entries, exterior inspection paths, semi-outdoor material handling, and any robot that moves between bright and dark regions. In these scenes, the goal is not just some depth at long range. The goal is depth that remains coherent enough to guide real actions while scene contrast and ambient illumination change around it. Better completeness and better boundary behavior expand where stereo remains useful, which is often more important than a single peak specification on a clean test chart.

Inspection Robots

Stable long-range depth for outdoor navigation across changing illumination and uneven terrain.

Commercial Cleaning Robots

Reliable near-field obstacle perception under reflective floors and changing indoor lighting.

Forklifts

Clearer pallet boundaries and more usable depth for localization while the vehicle is in motion.

Robotic Arms

Stronger contour continuity around target objects for picking, positioning, and manipulation tasks.

Why On-Camera Refinement Also Helps System Design

There is another benefit here that robotics teams usually appreciate quickly: cleaner compute allocation.

A robot host is rarely underworked. It may already be responsible for sensor fusion, localization, SLAM, path planning, behavior logic, manipulation, logging, fleet interfaces, and application AI. Adding more host-side depth cleanup often looks manageable in a prototype and then becomes expensive when the system scales, when more cameras are added, or when latency budgets tighten.
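
A back-of-the-envelope calculation shows how quickly that cost scales. The Python sketch below counts how many depth pixels per second a host would have to touch if it ran its own cleanup filters. The resolution, frame rate, camera count, and number of filter passes are hypothetical values for illustration, not Gemini 435LE specifications or measured workloads.

```python
def pixels_per_second(width: int, height: int, fps: int,
                      cameras: int = 1, filter_passes: int = 1) -> int:
    """Depth pixels the host must process each second."""
    return width * height * fps * cameras * filter_passes


# Hypothetical setup: 1280x800 depth at 30 fps.
one_camera = pixels_per_second(1280, 800, 30)
# Same stream, but four cameras and three host-side filter passes each.
four_cameras_three_passes = pixels_per_second(
    1280, 800, 30, cameras=4, filter_passes=3
)
# one_camera == 30_720_000
# four_cameras_three_passes == 368_640_000
```

Under these assumptions, a load that looks modest in a one-camera prototype grows twelvefold once cameras and filter stages are added, before any memory-bandwidth or latency effects are considered.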

This is why efficient depth processing has become a recurring concern in both academic and edge-computing research. Lightweight depth-completion work explicitly describes computational cost as a central barrier to real-world deployment, and edge-device rectification research argues that many stronger correction methods are still unsuitable where resources are constrained. These are different communities, but they are reacting to the same fact: compute spent repairing depth is compute not spent on autonomy.

By performing depth generation and AI-enhanced refinement on camera, Gemini 435LE is designed to shift that balance. The host can keep more of its budget for the tasks that differentiate the robot, rather than using the same budget to clean up a perception signal that should have arrived in a more usable state in the first place.

That architectural choice does not remove all downstream work. Real systems still need sensor fusion, application logic, and task-specific validation. But it can reduce the amount of depth-specific post-processing and tuning the system needs, simplify integration decisions, and make it easier to preserve headroom for the rest of the autonomy stack.

How Robotics Teams Should Evaluate Depth Beyond the Spec Sheet

If the goal is more usable depth, then the evaluation criteria should reflect that.

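A better evaluation set looks something like this:

- Depth completeness: how much valid depth is available in the task-relevant region of interest.

- Boundary quality: whether object edges and contours are preserved clearly enough for positioning, obstacle handling, or measurement.

- Temporal stability: whether output remains consistent over time when the robot or scene is moving.

- System burden: how much host-side compute is still required to make the depth useful.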

Those criteria line up better with how robotics frameworks actually consume depth and with how the research community has framed the missing-depth, boundary-recovery, and efficiency problems. They also align much more closely with what engineering teams eventually debug in the field.
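
Two of these ideas, depth completeness in a region of interest and temporal stability, are straightforward to turn into numbers. The Python sketch below is one possible way to do that, not a standard benchmark: the function names, the use of 0.0 as the invalid marker, and the choice of per-pixel standard deviation as a stability measure are all assumptions. Boundary quality and system burden usually need ground truth or profiling and are left out.

```python
from statistics import pstdev
from typing import List, Sequence

INVALID = 0.0  # illustrative convention: 0.0 marks a hole

Frame = Sequence[Sequence[float]]


def completeness(roi: Frame) -> float:
    """Fraction of pixels in the region of interest with valid depth."""
    values = [z for row in roi for z in row]
    return sum(1 for z in values if z != INVALID) / len(values)


def temporal_jitter(frames: Sequence[Frame]) -> float:
    """Mean per-pixel standard deviation across frames (depth units).

    Only pixels that are valid in every frame are included.
    """
    rows, cols = len(frames[0]), len(frames[0][0])
    spreads: List[float] = []
    for r in range(rows):
        for c in range(cols):
            samples = [frame[r][c] for frame in frames]
            if all(z != INVALID for z in samples):
                spreads.append(pstdev(samples))
    return sum(spreads) / len(spreads) if spreads else float("nan")


# Three tiny 2x2 regions of interest from a static scene, in meters.
f1 = [[2.0, 2.0], [0.0, 3.0]]
f2 = [[2.0, 2.2], [0.0, 3.0]]
f3 = [[2.0, 1.8], [2.5, 3.0]]

roi_completeness = completeness(f1)       # 3 of 4 pixels valid
jitter = temporal_jitter([f1, f2, f3])    # driven by the top-right pixel
```

Running measurements like these on the regions a task actually depends on, such as a pallet opening or an aisle boundary, tends to say more than a whole-frame average.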

That is the lens through which Gemini 435LE should be understood.

It is not only a long-range stereo camera. It is a depth camera designed to produce a more usable result directly at the source: more complete structure, cleaner contour definition, and less dependence on host-side cleanup.

For robotics teams, that changes the conversation.

The question is no longer only how far a depth camera can see.

The better question is how much of that depth your robot can actually trust.

And in real robotics, trust is usually worth more than another line on a specification table.

Frequently Asked Questions

1. What is ‘usable depth’ and why does it matter more than headline specifications?

Usable depth refers to how much of the depth data a robot can actually trust and act upon, rather than just how far a camera can see. Depth that looks acceptable in a static screenshot can still be difficult for a robot to use if it contains fragmented surfaces, unstable regions, or weak object boundaries. For navigation, this can mean uncertainty in free space. For pallet-facing or measurement tasks, it can mean lost contour detail. The real question is not simply ‘How far does it see?’ but ‘How much trustworthy geometry does it preserve in the parts of the scene where decisions are actually made?’

2. What are the common weak points of stereo depth in real-world robotics applications?

Real-world scenes expose several places where raw stereo output becomes harder to trust: missing or invalid depth from occlusions, lack of texture, or exposure problems; boundary fragmentation where pixels at object borders are invalidated during left-right consistency checks; and weak contours where depth contains blur and noise around object edges. These issues matter because boundaries are where scene geometry changes meaning: the edge of a box, a pallet opening, a rack boundary, or a free-space transition. Depth-rectification research notes that correcting these boundaries remains difficult because flat regions are easier to recover than discontinuous ones.

3. How does Gemini 435LE’s on-camera AI-enhanced refinement improve depth quality?

Gemini 435LE combines long-baseline stereo with dedicated onboard processing for both depth generation and AI-enhanced refinement. This architecture improves depth at the camera stage rather than treating the camera as a raw depth source that the host must repair later. The refinement is designed to improve depth completeness (fewer problematic regions for downstream modules), improve edge and contour fidelity (better boundaries for positioning and obstacle handling), and reduce the host-side cleanup burden. The value is that refinement happens on camera, applied to problems robotics teams face daily: incomplete depth, weak contours, and fragile scene geometry.

4. How should robotics teams evaluate depth cameras beyond the spec sheet?

A better evaluation framework includes: Depth completeness (how much valid depth is available in the task-relevant region of interest), Boundary quality (whether object edges and contours are preserved clearly enough for positioning, obstacle handling, or measurement), Temporal stability (whether output remains consistent over time when the robot or scene is moving), and System burden (how much host-side compute is still required to make the depth useful). These criteria align with how robotics frameworks actually consume depth and what engineering teams eventually debug in the field.

Appendix: Selected Supporting References

- ROS 2 Nav2 Documentation – Concepts. Explains how depth and camera data feed costmap layers used for obstacle marking and collision avoidance. https://docs.nav2.org/concepts/index.html

- ROS 2 Nav2 Documentation – Filtering of Noise-Induced Obstacles. Notes that noisy sensor measurements can create false obstacles and degrade navigation behavior. https://docs.nav2.org/tutorials/docs/filtering_of_noise-induced_obstacles.html

- DepthComp: Real-time Depth Image Completion Based on Prior Semantic Scene Segmentation. BMVC 2017 paper discussing incomplete depth and the challenge of obtaining hole-free scene depth from conventional devices. https://www.bmva-archive.org.uk/bmvc/2017/papers/paper058/paper058.pdf

- Dealing with Missing Depth: Recent Advances in Depth Image Completion and Estimation. Survey discussing missing or incomplete depth as a long-standing problem in depth perception systems. https://breckon.org/toby/publications/papers/abarghouei19missing-depth.pdf

- Depth Image Rectification Based on an Effective RGB-Depth Boundary Inconsistency Model. 2024 paper focused on correcting depth distortions around object boundaries. https://www.mdpi.com/2079-9292/13/16/3330

- Lightweight Depth Completion Network with Local Similarity-Preserving Knowledge Distillation. Paper framing depth completion as both a quality problem and a real-time computational problem. https://www.mdpi.com/1424-8220/22/19/7388

- VoxDepth: Rectification of Depth Images on Edge Devices. Recent edge-device work arguing that many stronger depth-correction methods remain too heavy for constrained platforms. https://arxiv.org/abs/2407.15067

- Intel RealSense – Depth Post-Processing for D400 Series. Official documentation explaining common stereo holes, filters, and why some robotic applications may prefer holes to weak guesses. https://dev.realsenseai.com/docs/depth-post-processing

- Luxonis DepthAI – StereoDepth Node Documentation. Official documentation covering stereo post-processing including spatial, temporal, and hole-filling filters. https://docs.luxonis.com/software-v3/depthai/depthai-components/nodes/stereo_depth

- Outdoor Localization Using Stereo Vision Under Various Illumination Conditions. Paper discussing how outdoor illumination changes challenge stereo-based localization. https://www.furo.org/irie/outdoor_stereo_localization_ar2012.pdf

- High Dynamic Range Stereo Vision for Outdoor Mobile Robotics. ICRA paper showing how variable lighting and high contrast can cause stereo matching failures outdoors. https://www.researchgate.net/publication/221069476

- Real-time Pallet Localization with 3D Camera Technology for Forklifts in Logistic Environments. Forklift paper describing pallet localization as a real-time task performed while driving. https://mediatum.ub.tum.de/doc/1453019/531992.pdf

- Recognition and Location Algorithm for Pallets in Warehouses Using RGB-D Sensor. Warehouse pallet study noting that accuracy degrades as detection moves toward the edge of the sensor’s effective range. https://www.researchgate.net/publication/364441882

Experience More Usable Depth With Gemini 435LE

Discover how AI-enhanced on-camera refinement delivers cleaner contours, better completeness, and less host-side cleanup for your robotics application.