The Future of Robotics: Trends in Stereo Vision Technology

When robots cannot accurately interpret distance, movement, or spatial relationships, their performance suffers. For developers working in industrial automation, logistics, health care robotics, and autonomous systems, perception reliability is often one of the most complex technical challenges. This is where stereo vision technology comes into play.

Stereo vision has existed in robotics for decades, and robot vision itself dates back to early research platforms such as Shakey the Robot, developed at Stanford Research Institute. This article explores the trends shaping stereo vision technology in modern robotic systems.

Five Key Trends in Stereo Vision Technology

Five major trends are shaping the evolution of robotic vision. Together, these developments are redefining robotic visual intelligence.

1. Neural Stereo Vision and AI-Enhanced Matching Algorithms

Traditional stereo vision relied on hand-crafted algorithms such as semi-global matching to solve the correspondence problem, which involves identifying matching pixels between the left and right images. While effective in controlled environments, these methods struggled with low-texture scenes, reflective surfaces, and inconsistent lighting.
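To make the correspondence problem concrete, the following sketch uses simple sum-of-absolute-differences (SAD) block matching along one rectified scanline. This is a deliberately simpler technique than semi-global matching, which adds smoothness penalties aggregated along many scan directions; the function name and the toy pixel values are illustrative assumptions, not any product's implementation.

```python
def sad_disparity_row(left: list[int], right: list[int], max_disp: int, half_window: int = 1) -> list[int]:
    """Estimate a disparity for each pixel of one rectified scanline.

    For pixel x in the left row, the matching pixel in the right row is
    assumed to lie at x - d for some disparity d in [0, max_disp].
    """
    width = len(left)
    disparities = []
    for x in range(width):
        best_d, best_cost = 0, float("inf")
        for d in range(max_disp + 1):
            cost, valid = 0, True
            # Compare a small window around x (left) with the window around x - d (right).
            for k in range(-half_window, half_window + 1):
                xl, xr = x + k, x + k - d
                if not (0 <= xl < width and 0 <= xr < width):
                    valid = False
                    break
                cost += abs(left[xl] - right[xr])
            # Keep the lowest-cost candidate; ties keep the earlier (smaller) disparity.
            if valid and cost < best_cost:
                best_d, best_cost = d, cost
        disparities.append(best_d)
    return disparities


if __name__ == "__main__":
    left = [0, 0, 10, 50, 90, 20, 0, 0, 0, 0]  # toy intensities
    right = left[2:] + [0, 0]                  # same row shifted by a true disparity of 2
    print(sad_disparity_row(left, right, max_disp=3))
```

In the textured region (pixels 3 to 5) the matcher recovers the true shift of 2 pixels, but in the flat run of zeros every candidate disparity has the same cost, so it simply keeps the first one (0). That tie is a small-scale version of the low-texture failure mode described above.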

With neural stereo, machine learning models learn to use context from across the entire scene rather than relying only on local pixel comparisons. This enables more reliable depth estimation in complex environments.

The following developments illustrate how AI is improving stereo perception:

- Deep stereo-matching networks: Neural models learn visual patterns from large datasets, enabling improved depth estimation.

- Robotics visual intelligence integration: AI-driven perception systems can adapt to environmental changes, improving long-term reliability in autonomous mobile robots (AMRs).

- Self-optimizing perception pipelines: Some stereo systems can automatically tune parameters based on environmental conditions, reducing the need for manual tuning.
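Many deep stereo networks (GC-Net and PSMNet, for example) build a cost volume over candidate disparities and then regress a sub-pixel disparity with a differentiable "soft argmin" instead of picking the single lowest-cost candidate. A minimal standard-library sketch of that final step for one pixel, assuming a list of matching costs where a lower cost means a better match (the function name is hypothetical):

```python
import math


def soft_argmin_disparity(costs: list[float]) -> float:
    """Sub-pixel disparity from one pixel's cost vector (index = candidate disparity)."""
    if not costs:
        raise ValueError("costs must be non-empty")
    # Softmax over negated costs: low cost -> high weight.
    scores = [-c for c in costs]
    peak = max(scores)  # subtract the max for numerical stability
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    # Expected disparity under the softmax distribution.
    return sum(d * w for d, w in enumerate(weights)) / total


if __name__ == "__main__":
    print(soft_argmin_disparity([4.0, 0.5, 0.6, 5.0]))  # lands between 1 and 2
```

Because every candidate contributes in proportion to its weight, the output can fall between integer disparities, and the operation stays differentiable, so a network can be trained end to end on depth targets.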

2. Embedded Stereo Cameras and Edge-Based Depth Processing

Processing depth data on a robot’s main computer can introduce latency and increase computational load. Stereo matching is computationally intensive, so running it on the main computer competes with other workloads and can degrade overall performance. As robotics systems become more complex, distributing processing responsibilities across hardware components becomes increasingly important.

This is driving the growth of embedded stereo cameras that perform edge-based depth processing directly within the camera hardware. For example, application-specific integrated circuits can enable depth algorithms to run locally, reducing the amount of data that must be transmitted to the robot’s main processor.

The following benefits are emerging from this shift:

- Lower latency perception: On-device processing enables faster reaction times for robotic navigation and manipulation tasks.

- Reduced system overhead: Offloading depth computation frees the robot’s central processor for simultaneous localization and mapping (SLAM), planning, and control.

- Improved system reliability: Dedicated hardware pipelines provide consistent performance across deployments.

3. Multimodal Perception Systems

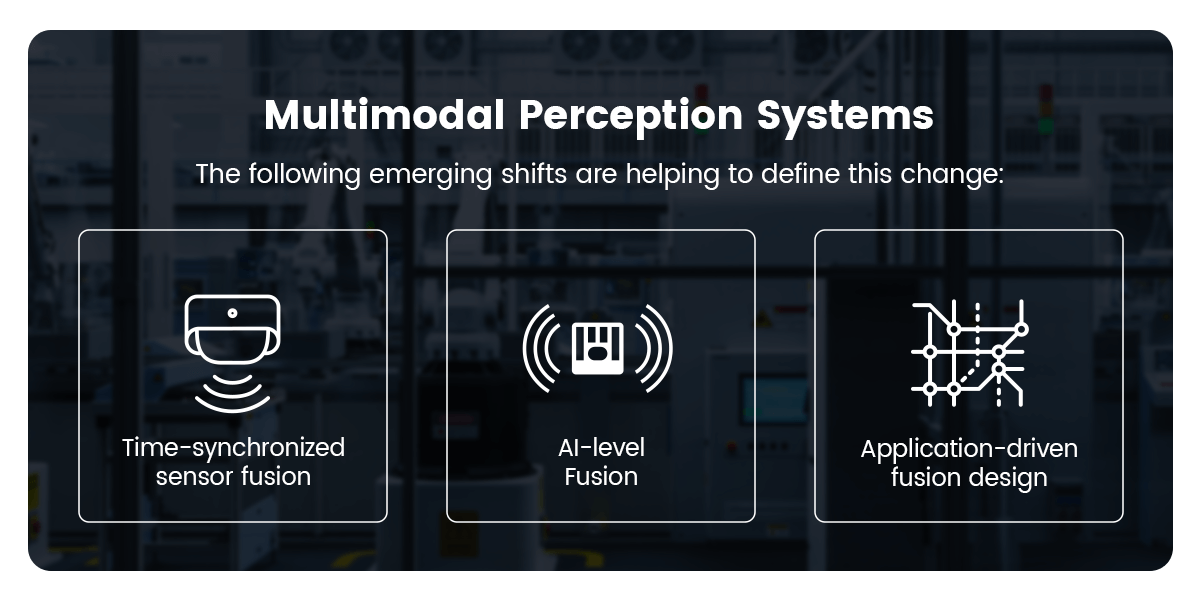

Modern robotics systems have long combined stereo cameras, inertial measurement units (IMUs), and light detection and ranging (LiDAR). What is changing now is how tightly these systems are integrated and how intelligently the data is processed.

In the next phase of robotics perception, stereo cameras are becoming central nodes in perception architectures that prioritize real-time fusion, synchronized timing, and AI-driven interpretation.

The following emerging shifts are helping to define this change:

- Time-synchronized sensor fusion: Modern robotic platforms increasingly rely on hardware-level synchronization among stereo cameras, IMUs, and LiDAR to improve localization stability.

- AI-level fusion instead of post-processing fusion: Rather than combining sensor outputs after independent processing, newer architectures feed multimodal data directly into a single learned model, enabling stronger object detection and environmental understanding.

- Application-driven fusion design: Advanced robotic platforms, including legged robots and autonomous mobile systems, integrate RGB-D cameras with LiDAR to balance near-field manipulation and long-range mapping capabilities.
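Once hardware synchronization puts the stereo camera and IMU on a common clock, software still has to associate each image with the inertial samples around it. A minimal sketch of nearest-timestamp matching, assuming both streams are stamped in integer microseconds on the same clock and that the IMU timestamps are sorted; the function name, sample rates, and gap threshold are illustrative assumptions:

```python
import bisect
from typing import List, Optional


def match_nearest_imu(frame_times_us: List[int], imu_times_us: List[int], max_gap_us: int) -> List[Optional[int]]:
    """Return, for each camera frame, the index of the nearest IMU sample.

    imu_times_us must be sorted ascending. A frame gets None when no IMU
    sample lies within max_gap_us of its timestamp.
    """
    matches: List[Optional[int]] = []
    for t in frame_times_us:
        # Index of the first IMU sample at or after the frame time.
        i = bisect.bisect_left(imu_times_us, t)
        # The nearest sample is either just before or just after t.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(imu_times_us)]
        if not candidates:
            matches.append(None)
            continue
        best = min(candidates, key=lambda j: abs(imu_times_us[j] - t))
        matches.append(best if abs(imu_times_us[best] - t) <= max_gap_us else None)
    return matches


if __name__ == "__main__":
    imu = [0, 5_000, 10_000, 15_000, 20_000]  # hypothetical 200 Hz IMU
    frames = [4_000, 10_100, 50_000]         # hypothetical camera timestamps
    print(match_nearest_imu(frames, imu, max_gap_us=3_000))  # [1, 2, None]
```

The gap threshold keeps a frame from being paired with stale inertial data when samples are dropped; production pipelines often interpolate or integrate between the bracketing IMU samples rather than taking only the nearest one.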

4. Miniaturization in Stereo Vision Technology

As robots become smaller, more mobile, and more specialized, their perception hardware must evolve accordingly. The miniaturization of stereo vision technology is enabling new robotic form factors and applications.

Smaller stereo systems are particularly valuable for precision manipulation. Lightweight sensors can be mounted close to the end-effector, providing close-range spatial awareness.

A few technical shifts are driving this evolution:

- Shortened stereo baselines with algorithmic compensation: Reducing the physical distance between the two image sensors decreases overall device width, but a shorter baseline also reduces depth resolution, especially at longer range. Advanced depth algorithms compensate for narrower baselines to maintain depth reliability at operational ranges.

- Integrated ASIC and PCB consolidation: Embedding dedicated depth-processing application-specific integrated circuits (ASICs) directly onto compact boards reduces external hardware dependencies and shrinks overall system footprint.

- Optimized optical module design: Improvements in lens alignment, sensor packaging, and mechanical enclosure design allow stereo systems to maintain structural stability within smaller housings.
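The baseline trade-off follows from the standard rectified pinhole stereo model, where depth is Z = f·B/d (focal length f in pixels, baseline B, disparity d in pixels). Differentiating gives a first-order depth uncertainty of roughly ΔZ ≈ Z²·Δd/(f·B), so halving the baseline doubles the depth error at a given range. A small sketch with hypothetical camera values:

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified pinhole stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_error(focal_px: float, baseline_m: float, depth_m: float, disparity_err_px: float) -> float:
    """First-order depth uncertainty: dZ ~= Z**2 * dd / (f * B)."""
    return depth_m ** 2 * disparity_err_px / (focal_px * baseline_m)


if __name__ == "__main__":
    FOCAL_PX = 700.0          # hypothetical focal length in pixels
    DISPARITY_ERR_PX = 0.25   # hypothetical sub-pixel matching error
    for baseline_m in (0.05, 0.025):  # hypothetical 50 mm vs 25 mm baselines
        err = depth_error(FOCAL_PX, baseline_m, 1.0, DISPARITY_ERR_PX)
        print(f"baseline {baseline_m * 1000:.0f} mm -> ~{err * 1000:.1f} mm error at 1 m")
```

With these assumed values, the 50 mm baseline gives roughly 7.1 mm of uncertainty at 1 m and the 25 mm baseline roughly 14.3 mm, which is the gap that sub-pixel matching and other algorithmic improvements aim to close.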

5. Environmental Robustness in Stereo Vision Technology

As robotics systems move beyond controlled indoor environments, stereo perception must operate reliably across a wide range of environmental conditions. Illumination is only one variable. Temperature fluctuation, electrical stability, mechanical vibration, interface reliability, and long-term durability all influence perception performance.

The future of stereo vision technology is focused on expanding operational boundaries to ensure perception systems remain stable in real-world deployment conditions.

The following technical advancements are contributing to this shift:

- Wide temperature operating ranges: Modern stereo systems are engineered to function across extended temperature bands, supporting robotics deployed in cold storage facilities, outdoor environments, and high-heat industrial settings.

- Power supply tolerance and efficiency optimization: Improved power regulation and energy-efficient ASIC integration allow stereo cameras to maintain stable operation despite voltage variation or constrained power budgets.

- Stable high-speed interface architectures: Robust data interfaces such as MIPI Camera Serial Interface 2 (CSI-2) for short board-level links, or Gigabit Multimedia Serial Link (GMSL) for longer cable runs, help maintain reliable communication between stereo cameras and compute platforms in high-vibration or electrically noisy systems.

The Converging Future of Robotics Visual Intelligence

These five trends are reshaping robotic perception capabilities. Neural perception algorithms provide smarter depth interpretation, embedded hardware enables faster processing, sensor fusion improves reliability, miniaturization expands deployment possibilities, and environmental adaptability enables operation in real-world conditions.

Together, these advances are accelerating the development of robots capable of operating in unstructured environments such as homes, warehouses, hospitals, and public spaces.

Industries well positioned for this transformation include:

- Industrial automation: Robots performing adaptive assembly and inspection tasks.

- Warehouse robotics: AMRs navigating dynamic environments.

- Health care robotics: Assistive robots supporting clinical workflows.

- Retail automation: Inventory and service robots operating in human environments.

- AR/VR/MR systems: Spatial computing platforms using stereo perception.

Frequently Asked Questions

Get your pressing questions on the future of robotics answered.

1. Why Is Stereo Vision Important for Modern Robotics Systems?

Stereo vision can estimate depth passively, from ambient light, without requiring the sensor to emit its own signal, which makes it a scalable perception method for many robotic applications. Advances in AI algorithms, embedded processing, and sensor fusion are making stereo systems more reliable in real-world environments.

2. What Features Define the Best Stereo Vision Camera for Robotics?

Developers typically look for embedded processing capabilities, environmental adaptability, compact form factors, and support for multimodal perception systems. These features help stereo perception systems perform reliably across industrial automation, warehouse robotics, and research applications.

3. What Is Edge-Based Depth Processing in Stereo Vision Technology?

Edge-based depth processing refers to computing depth data directly within the stereo camera using embedded processors or ASICs. This reduces latency and frees the robot’s main processor to focus on navigation, planning, and control tasks.

Democratizing Advanced Perception With Orbbec

When evaluating the best stereo vision camera for robotics, developers should prioritize embedded processing, neural perception capabilities, support for multimodal integration, compact form factors, and environmental adaptability, because these capabilities are becoming foundational requirements for modern robotic perception systems.

Orbbec delivers 3D vision that reflects the broader industry movement toward democratizing advanced perception technology. By investing across sensors, silicon, AI algorithms, manufacturing, and life cycle support, our products enable developers to build scalable solutions.

Consult with our 3D vision experts to explore how Orbbec’s stereo vision technology can enhance your robotic applications.

Build the Future of Robotics With Orbbec

Whether you’re developing autonomous mobile robots, industrial automation systems, or health care robotics, our experts can help you select the ideal stereo vision solution for your application.