Robotics technology has come a long way over the last few decades, and 3D vision systems are at the heart of that progress. 3D vision systems enhance the sensory capabilities of robots, enabling them to “see” in three dimensions, perceive distance, and detect and track multiple objects at once. These capabilities help robots interpret complex surroundings. From self-driving cars to delivery drones, robots equipped with 3D vision are changing the way we live and interact with the world.

Explore how 3D vision systems work, how they are ushering in a new era of robotic capabilities, and how to implement these technologies to fully leverage the power of modern robotics.

The Evolution of Perception in Robotics

From simple sensor-based systems to sophisticated 3D vision technologies, robotics has evolved in many ways over the years.

Traditional Robots

In the early days, robots mainly relied on basic sensors such as limit switches and 2D cameras. They could not perceive depth or recognize objects. They were also sensitive to changes in lighting and could not operate in non-deterministic environments.

A Shift to Sophistication

As robots began to be used for more demanding tasks, they needed more sophisticated sensing and processing techniques. Beyond performing specific, repetitive tasks in controlled spaces, robots had to understand their environment and make decisions, for example when working in automated warehouses.

With the development of machine learning and 3D machine vision for robotics, more and more robots can now perceive their surroundings and make decisions in real time.

Modern Trends

Here are some modern trends in robotic technology:

- Sophisticated sensors: Modern robots get their data from various sensors, including visual, auditory, and tactile sensors. With 3D vision feeding them depth perception, robots can “see” their surroundings better. They can recognize and pick out objects with greater accuracy and speed, respond correctly to changes in their environment, and complete a wide range of unique tasks.

- Deep learning algorithms: Deep learning has made it possible for robots to learn from data and from their own experience. With these abilities, they can make better decisions and become better problem solvers over time.

- Human-robot collaboration: With better perception and decision-making abilities, robots can now interact with humans more effectively and work alongside them. For example, they might help pick items from shelves in warehouses or load and unload products from trucks.

How 3D Cameras Enable Advanced Perception

With 3D vision cameras, robots can determine the shapes, sizes, and positions of objects around them. When equipped with greater spatial awareness, they can more easily get around unstructured spaces and avoid obstacles.

For autonomous mobile robots (AMRs), for example, 3D robot vision can make navigation safer and more efficient. Detailed depth maps let the robot move faster and plan better paths. This technology also allows robots to adapt to dynamic, unstructured environments and map those spaces as they go.

Three-dimensional vision also helps robotic arms with tasks like assembly, quality control, and bin picking. A robotic arm equipped with 3D cameras can locate and pick specific objects, distinguishing them even among thousands of different products.

Core 3D Vision Technologies

Stereo vision and time-of-flight are the core mechanisms that give robots distance (depth) perception and allow them to track multiple objects at once. Here’s how these core technologies work.

Stereo Vision

Stereo vision uses two cameras to capture two images of the same scene from slightly different viewpoints. The system then calculates the disparity (the horizontal shift) between matching points in the two images and uses it to estimate the depth of objects in the scene. In its basic form, stereo vision relies on ambient lighting and works as a passive sensor.
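The disparity-to-depth step can be sketched in a few lines of Python. This is a minimal illustration, not Orbbec's algorithm: it assumes rectified images (so matching points lie on the same row), uses a simple sum-of-absolute-differences (SAD) block match along one scanline, and converts disparity to depth with the standard pinhole relation Z = f·B/d, where f is the focal length in pixels, B the baseline in metres, and d the disparity in pixels. The function names and example numbers are hypothetical.

```python
def match_disparity(left_row, right_row, x, max_disp, half_win):
    """Return the disparity (in pixels) that best matches pixel x of the left row.

    Assumes rectified images: a point at column x in the left image appears at
    column x - d in the right image. The matching cost is the sum of absolute
    differences (SAD) over a window of 2 * half_win + 1 pixels.
    """
    if x - half_win < 0 or x + half_win >= len(left_row):
        raise ValueError("window around x falls outside the left row")
    best_d, best_cost = 0, float("inf")
    for d in range(max_disp + 1):
        if x - d - half_win < 0 or x - d + half_win >= len(right_row):
            continue  # candidate window would fall outside the right row
        cost = sum(
            abs(left_row[x + k] - right_row[x - d + k])
            for k in range(-half_win, half_win + 1)
        )
        if cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


def depth_from_disparity(d_px, focal_px, baseline_m):
    """Pinhole stereo relation Z = f * B / d; zero disparity means 'infinitely far'."""
    if d_px <= 0:
        return float("inf")
    return focal_px * baseline_m / d_px


# Synthetic example: the right row is the left row shifted by 4 pixels.
left = [(i * 37) % 101 for i in range(40)]  # distinct values act as strong texture
right = left[4:]                            # right[j] == left[j + 4]
d = match_disparity(left, right, x=20, max_disp=8, half_win=2)
print(d, depth_from_disparity(d, focal_px=700.0, baseline_m=0.1))  # 4 17.5
```

Real stereo pipelines match every pixel, add sub-pixel refinement, and reject ambiguous matches, which is exactly where textureless surfaces cause trouble: with no distinctive pattern, many disparities give similar costs.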

The accuracy of stereo vision cameras depends on a few factors, namely the baseline, resolution, and calibration quality:

- Baseline: The baseline is the distance between the two cameras. A wider baseline generally gives more accurate depth measurements, especially at longer ranges, but it also increases the risk of occlusions, where one camera cannot see a point that is visible to the other.

- Resolution: Resolution is determined by the number of pixels in each image. The higher the resolution, the finer the details and the better the depth accuracy.

- Calibration quality: Camera calibration determines the cameras’ parameters, including focal length, lens distortion, and the relative position and orientation of the two cameras, all of which are needed for accurate depth measurement.
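The effect of baseline and resolution on accuracy can be made concrete by differentiating Z = f·B/d: a disparity error of Δd pixels produces a depth error of roughly ΔZ ≈ Z²·Δd / (f·B). The error grows with the square of distance and shrinks as the baseline B or the focal length in pixels f grows; for the same lens, a higher-resolution sensor has smaller pixels and therefore a larger f in pixel units. The sketch below evaluates this first-order approximation; the 700-pixel focal length and one-pixel matching error are illustrative assumptions, not specifications of any particular camera.

```python
def depth_error(z_m, focal_px, baseline_m, disp_err_px=1.0):
    """First-order stereo depth uncertainty: dZ ~= Z^2 * dd / (f * B)."""
    return z_m ** 2 * disp_err_px / (focal_px * baseline_m)


for baseline in (0.05, 0.10):
    for z in (1.0, 2.0, 4.0):
        err_mm = 1000 * depth_error(z, focal_px=700.0, baseline_m=baseline)
        print(f"B = {baseline:.2f} m, Z = {z:.0f} m -> depth error ~ {err_mm:.1f} mm")
```

Doubling the baseline halves the error at every distance, while doubling the distance quadruples it, which is why wider baselines pay off for longer-range tasks despite their higher occlusion risk.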

Stereo vision is often used in applications where accuracy is essential, such as:

- Robotic arm cameras: Finding and picking parts with robotic arms to assemble or relocate.

- 3D scanning: Creating detailed 3D models of objects or environments.

- Autonomous navigation: Providing accurate depth information to avoid obstacles and plan paths.

Orbbec has extensive experience in this technology, developing stereo vision cameras and algorithms that calculate distance accurately and precisely, even in challenging environments. Orbbec’s stereo cameras integrate easily with a wide range of robotic platforms, helping developers incorporate them into their system designs and accelerate prototyping and deployment.

Time-of-Flight (ToF)

ToF technology measures the time it takes for light to travel from the camera to an object and back. From this round-trip time, a ToF camera calculates the distance between the camera and each point in the scene. Because the measurement is fast and can be processed in real time, ToF is well suited to dynamic environments.

Unlike passive stereo vision, ToF uses active illumination, typically in the infrared spectrum, to measure the distance to objects. Indirect (continuous-wave) ToF cameras emit a modulated light signal and measure the phase shift of the reflected signal to determine the time of flight, while direct ToF sensors time short light pulses. Because the camera supplies its own light, it can capture depth information quickly, even in low-light conditions.
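Both ToF measurement principles reduce to short formulas. For a direct round-trip time t, the distance is d = c·t / 2, since the light travels out and back. For indirect ToF with modulation frequency f_mod, a measured phase shift Δφ gives d = c·Δφ / (4π·f_mod), and distances repeat every c / (2·f_mod), the unambiguous range. The sketch below only evaluates these textbook relations; real cameras add calibration, multi-frequency phase unwrapping, and noise handling, and the 20 MHz example frequency is an assumption.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def distance_from_round_trip(t_s):
    """Direct ToF: light travels to the object and back, so halve the path."""
    return C * t_s / 2


def distance_from_phase(phase_rad, f_mod_hz):
    """Indirect (continuous-wave) ToF: phase shift of the modulated signal -> distance."""
    return C * phase_rad / (4 * math.pi * f_mod_hz)


def unambiguous_range(f_mod_hz):
    """Beyond this distance the phase wraps around and distances alias."""
    return C / (2 * f_mod_hz)


print(distance_from_round_trip(10e-9))     # ~1.499 m for a 10 ns round trip
print(unambiguous_range(20e6))             # ~7.495 m at 20 MHz modulation
print(distance_from_phase(math.pi, 20e6))  # half the unambiguous range, ~3.747 m
```

The 10 ns example also shows why ToF electronics must be fast: each nanosecond of timing error corresponds to about 15 cm of distance.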

ToF technology is ideal for applications where speed matters:

- Gesture recognition: Tracking hand movements for human-robot interaction.

- People counting: Counting the number of people in a room or area.

- Autonomous navigation: Determining depth information quickly to avoid obstacles and plan routes.

Orbbec’s ToF camera solutions offer high frame rates, wide field of view, and exceptional performance in various lighting conditions. These ToF cameras also easily integrate with robotic systems, making them a powerful tool for innovative uses.

Integration of Stereo Vision and ToF

Integrating both stereo vision and ToF into robots enhances their performance and versatility. The robots benefit from the high accuracy of stereo vision and the high speed of ToF, getting more reliable depth sensing that can be used in various environments. For example, a system could use stereo vision for high-accuracy depth measurements in static areas of a scene, while using ToF for depth updates in more dynamic areas.
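The stereo-plus-ToF example above can be sketched as a per-pixel selection rule. This is a hypothetical illustration, not Orbbec's fusion method: it assumes both depth maps are already registered to the same pixel grid and flattened into lists, that 0.0 marks an invalid pixel, and that a separate motion mask (for example from frame differencing) flags dynamic pixels. Moving pixels prefer the faster ToF reading, static pixels prefer the stereo reading, and either source fills in when the other has no valid value.

```python
def fuse_depth(stereo, tof, moving, invalid=0.0):
    """Per-pixel fusion: ToF for moving pixels, stereo for static ones, with fallback."""
    fused = []
    for s, t, is_moving in zip(stereo, tof, moving):
        primary, backup = (t, s) if is_moving else (s, t)
        fused.append(primary if primary != invalid else backup)
    return fused


stereo = [1.00, 2.00, 0.00, 4.00]
tof = [1.10, 2.20, 3.30, 0.00]
moving = [False, True, False, True]
print(fuse_depth(stereo, tof, moving))  # [1.0, 2.2, 3.3, 4.0]
```

A production system might instead weight each source by its estimated noise (inverse-variance weighting), but the selection rule keeps the idea easy to see.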

Orbbec stands at the forefront of both fields, integrating the strengths of stereo vision and ToF to deliver high-performance solutions even in the most demanding applications.

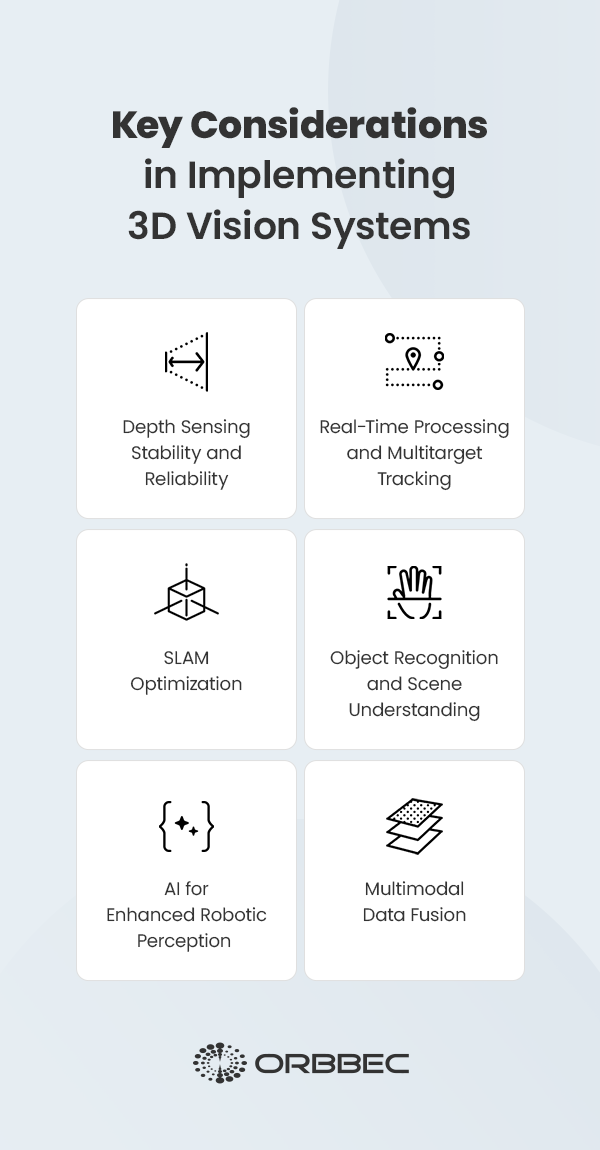

Implementing 3D Vision Systems: Key Considerations

Deploying 3D vision systems for robots involves more than choosing the right camera. To implement these systems successfully and realize the full potential of their robots, engineers and developers need to consider the following challenges.

1. Depth Sensing Stability and Reliability

Certain environmental factors can interfere with accurate depth measurement in 3D robotic vision. For example, too much or too little illumination can distort the depth data. Highly reflective surfaces and surfaces that lack texture can also degrade performance; textureless surfaces are especially difficult for passive stereo, which relies on visible features to match points between images. Transparent objects can be a challenge for many 3D camera systems as well.

Regular calibration is also important for keeping a 3D vision system accurate over time. Calibration estimates the camera’s intrinsic parameters, such as focal length and lens distortion, and its extrinsic parameters, such as position and orientation relative to the robot.
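To see why both parameter sets matter, consider turning a depth pixel into a point the robot can reach. The sketch below is a minimal pinhole-camera illustration, assuming an already undistorted depth image: the intrinsics (fx, fy, cx, cy) back-project pixel (u, v) with depth z into the camera frame, and the extrinsics (rotation R, translation t) move that point into the robot's base frame. All numeric values are hypothetical; an error in any of them shifts every computed point.

```python
def backproject(u, v, z, fx, fy, cx, cy):
    """Pinhole model: pixel (u, v) with depth z (metres) -> 3D point in the camera frame."""
    return ((u - cx) * z / fx, (v - cy) * z / fy, z)


def to_robot_frame(p_cam, R, t):
    """Apply the extrinsic calibration: p_robot = R @ p_cam + t."""
    return tuple(sum(R[i][j] * p_cam[j] for j in range(3)) + t[i] for i in range(3))


fx = fy = 600.0          # focal length in pixels (hypothetical)
cx, cy = 320.0, 240.0    # principal point (hypothetical)
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # camera axes aligned with the base
t = (0.1, 0.0, 0.5)      # camera offset from the robot base in metres (hypothetical)

p_cam = backproject(380, 240, 2.0, fx, fy, cx, cy)
print(p_cam)                        # approximately (0.2, 0.0, 2.0)
print(to_robot_frame(p_cam, R, t))  # approximately (0.3, 0.0, 2.5)
```

At 2 m, a focal-length error of just 1% moves this point sideways by about 2 mm, and an extrinsic error shifts it directly, which is why recalibration after bumps or remounting matters.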

Additionally, postprocessing algorithms and adaptive exposure control can help the 3D system adapt to environmental changes. These techniques also improve the system’s overall depth sensing.
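As one example of depth post-processing, the sketch below applies a 3×3 median filter that ignores invalid pixels (encoded as 0). It removes isolated spikes and fills small holes from valid neighbors. This is a generic textbook filter chosen for illustration, not a description of any particular camera's built-in processing; hole filling can also smear depth across object edges, so real pipelines typically limit it.

```python
from statistics import median


def median_filter_depth(depth, invalid=0.0):
    """3x3 median over valid neighbors; pixels with no valid neighbors stay invalid."""
    h, w = len(depth), len(depth[0])
    out = [[invalid] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            vals = [
                depth[rr][cc]
                for rr in range(max(0, r - 1), min(h, r + 2))
                for cc in range(max(0, c - 1), min(w, c + 2))
                if depth[rr][cc] != invalid
            ]
            if vals:
                out[r][c] = median(vals)
    return out


# A flat surface at 1 m with one spike (9.0) and one hole (0.0).
depth = [[1.0, 1.0, 1.0], [1.0, 9.0, 1.0], [1.0, 0.0, 1.0]]
print(median_filter_depth(depth))
```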

2. Real-Time Processing and Multitarget Tracking

In many applications, robots need to be able to process 3D vision data in real time. For example, to navigate autonomously, robots must be able to perceive their surroundings as quickly as possible and react to sudden changes. Low latency is, therefore, important in 3D vision-guided robotics systems, as it allows robots to react to inputs and commands with minimal delay.
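A quick calculation shows why latency matters. If perception and control add a delay t_lat before the robot starts braking at deceleration a, the stopping distance from speed v is roughly v·t_lat + v²/(2a). The numbers below (2 m/s travel speed, 2 m/s² deceleration) are illustrative assumptions.

```python
def stopping_distance(speed_mps, latency_s, decel_mps2):
    """Distance covered during the processing delay plus the braking distance."""
    return speed_mps * latency_s + speed_mps ** 2 / (2 * decel_mps2)


for latency in (0.05, 0.1, 0.3):
    d = stopping_distance(2.0, latency, 2.0)
    print(f"latency {latency * 1000:.0f} ms -> stops within {d:.2f} m")
```

At this speed, every 100 ms of latency adds 0.2 m of travel before braking even begins, which must be covered by larger safety margins or slower operation.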

Additionally, certain algorithms give robots the ability to detect objects, identify them, and track their movements over time. This multi-target tracking is commonly achieved with techniques like Kalman filtering and particle filtering. A Kalman filter estimates the state of a tracked object (or of the robot itself), such as its position and velocity, by combining noisy sensor measurements with a model of how it moves. A particle filter, on the other hand, represents the state with a set of weighted samples, or “particles”, each standing for one possible hypothesis, such as a possible location of the robot or object.
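A minimal constant-velocity Kalman filter for one coordinate of one tracked object illustrates the predict-and-correct cycle described above. It is a simplified sketch: the state is position and velocity, only position is measured, and the noise settings q and r are hypothetical tuning values (the process noise is simplified to a diagonal matrix). A multi-target tracker would run one such filter per object and add a data-association step, such as nearest-neighbor or Hungarian matching, to decide which detection belongs to which track.

```python
from dataclasses import dataclass


@dataclass
class ConstantVelocityKF:
    """1D Kalman filter with state [position x, velocity v]; only x is measured."""
    x: float = 0.0
    v: float = 0.0
    p00: float = 10.0  # state covariance P = [[p00, p01], [p10, p11]];
    p01: float = 0.0   # large initial values mean "very uncertain"
    p10: float = 0.0
    p11: float = 10.0
    q: float = 0.01    # process noise variance (hypothetical tuning value)
    r: float = 0.1     # measurement noise variance (hypothetical tuning value)

    def predict(self, dt):
        # Motion model F = [[1, dt], [0, 1]]: x <- x + v*dt, v unchanged.
        self.x += self.v * dt
        p00 = self.p00 + dt * (self.p01 + self.p10) + dt * dt * self.p11
        p01 = self.p01 + dt * self.p11
        p10 = self.p10 + dt * self.p11
        # P <- F P F^T + Q, with Q simplified to q on the diagonal.
        self.p00, self.p01, self.p10, self.p11 = p00 + self.q, p01, p10, self.p11 + self.q

    def update(self, z):
        # Measurement model H = [1, 0]: the sensor observes position only.
        s = self.p00 + self.r                # innovation variance
        k0, k1 = self.p00 / s, self.p10 / s  # Kalman gain
        y = z - self.x                       # innovation (measurement residual)
        self.x += k0 * y
        self.v += k1 * y
        # P <- (I - K H) P
        p00, p01, p10, p11 = self.p00, self.p01, self.p10, self.p11
        self.p00 = (1 - k0) * p00
        self.p01 = (1 - k0) * p01
        self.p10 = p10 - k1 * p00
        self.p11 = p11 - k1 * p01


kf = ConstantVelocityKF()
for step in range(50):
    kf.predict(dt=1.0)
    kf.update(float(step))  # target moving at 1 unit per step
print(round(kf.x, 2), round(kf.v, 2))
```

Even though velocity is never measured directly, the filter infers it from successive positions, which is what lets a tracker predict where an object will be in the next frame.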

Hardware is also important to consider — powerful processors and hardware accelerators like graphics processing units (GPUs) can accelerate robotic vision data processing.

3. SLAM Optimization

Simultaneous localization and mapping (SLAM) is a technique that allows robots to build a map of their environment while estimating their own location within that map. Loosely, SLAM works like an advanced GPS, except that the robot builds the map itself from onboard sensors instead of relying on satellite signals. The more advanced the SLAM technology, the more accurate and up to date the map becomes.

For robot fleets covering long distances, SLAM can be a game changer, letting robots plan routes while navigating unfamiliar environments. It goes beyond conventional fixed-path robotic movement and makes systems more responsive, which is why it is often used in home smart devices such as robot vacuums and home security robots.

When combined with 3D vision, SLAM can obtain the depth of each pixel directly, leading to faster initialization and more reliable tracking. By reducing reliance on complex visual algorithms, 3D input also lowers computational requirements, making real-time SLAM more feasible on edge devices like NVIDIA Jetson platforms. With accurate geometric data, feature matching also becomes more robust, reducing scale drift and improving pose estimation stability in non-deterministic environments.
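Depth makes the pose-estimation step more direct: when matched features already have metric coordinates, the motion between two frames can be solved in closed form instead of being inferred up to an unknown scale. The sketch below shows the planar (2D) version of this least-squares rigid alignment, which suits a ground robot; full RGB-D SLAM solves the 3D equivalent (for example the Kabsch/Umeyama method via SVD) and adds outlier rejection, mapping, and loop closure. It assumes correspondences are already known and is not the algorithm of any specific SLAM package.

```python
import math


def estimate_rigid_2d(src, dst):
    """Least-squares rotation angle and translation with dst_i ~= R(theta) @ src_i + t."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    num = den = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - sx, py - sy  # centered source point
        bx, by = qx - dx, qy - dy  # centered destination point
        num += ax * by - ay * bx   # accumulates |a||b| sin(angle from a to b)
        den += ax * bx + ay * by   # accumulates |a||b| cos(angle from a to b)
    theta = math.atan2(num, den)
    c, s = math.cos(theta), math.sin(theta)
    # The translation maps the rotated source centroid onto the destination centroid.
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, (tx, ty)


def transform(points, theta, t):
    """Rotate points by theta and then translate them by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]


# Synthetic check: the same landmarks expressed in two coordinate frames.
before = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0), (3.0, 1.0)]
after = transform(before, math.radians(30), (1.0, 2.0))
theta, t = estimate_rigid_2d(before, after)
print(round(math.degrees(theta), 6), tuple(round(v, 6) for v in t))  # 30.0 (1.0, 2.0)
```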

4. Object Recognition and Scene Understanding

Object recognition refers to a robot’s ability to identify and classify objects in different environments. It typically relies on deep learning, especially convolutional neural networks (CNNs), trained on massive image datasets to recognize different objects. Robots use object recognition to help with manufacturing tasks like:

- Automated assembly: Quickly identifying and picking the right parts that need to be assembled.

- Quality control: Inspecting products for defects like dents or scratches, reducing waste and improving product quality.

- Bin picking: Locating and retrieving specific parts from a bin of random objects.

- Inventory management: Scanning and identifying parts or products to track inventory levels and accurately count stock.

In logistics, robots can find and sort packages based on where they’re going or their size. They might also help load or unload boxes and pallets from trucks, automating tasks that can be physically demanding.

5. AI for Enhanced Robotic Perception

AI, especially machine learning and neural networks, is revolutionizing robotic perception. Machine learning algorithms can be trained to help robots recognize objects or understand a scene. They also help robots learn from experience, allowing them to adapt to environmental factors over time, such as lighting or weather changes. AI robot applications include:

- Automated guided vehicles (AGVs): AGVs in warehouses must be able to predict whether a person’s unpredictable movement could lead to a collision. Similarly, a drone on autopilot must know how to course-correct if other drones or birds get in its way during a survey.

- Cobots: Collaborative robots often need to understand speech, and AI algorithms can help them do exactly this. Through voice recognition, AI helps robots perceive the nuances of language more accurately, making it easier for humans and robots to interact and work together.

- Adaptive learning: Adaptive robotics takes cobots a step further, improving contextualization and learning over time. This improves cognitive functions and decision-making while also making it easier for multiple robots to work together. Adaptive learning is also applied in education: intelligent tutoring systems, for example, create curated lessons by gaining insight into each student’s needs and unique knowledge gaps.
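Before any learning is involved, the core collision question for an AGV can be framed kinematically: if the person and the vehicle keep their current velocities, how close will they get, and when? The sketch below computes that closest point of approach in 2D. It is a simplified, hypothetical baseline; the AI models described above would replace the constant-velocity assumption with learned predictions of how people actually move, and the 1.5 m safety radius is an assumed value.

```python
import math


def closest_approach(rel_pos, rel_vel):
    """Time (s) and distance (m) of closest approach, assuming constant velocities.

    rel_pos: other agent's position minus the robot's position.
    rel_vel: other agent's velocity minus the robot's velocity.
    """
    px, py = rel_pos
    vx, vy = rel_vel
    speed_sq = vx * vx + vy * vy
    # Minimize |p + v t| over t >= 0; with no relative motion, the closest moment is now.
    t = 0.0 if speed_sq == 0 else max(0.0, -(px * vx + py * vy) / speed_sq)
    return t, math.hypot(px + vx * t, py + vy * t)


# Robot drives along +x at 1 m/s; a person stands 5 m ahead and 1 m to the side.
t, d = closest_approach(rel_pos=(5.0, 1.0), rel_vel=(-1.0, 0.0))
print(t, d)  # 5.0 1.0
safety_radius_m = 1.5  # hypothetical clearance threshold
if d < safety_radius_m:
    print(f"predicted conflict in {t:.1f} s: slow down or replan")
```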

6. Multimodal Data Fusion

Multimodal data fusion refers to the combination of data from multiple sensors to give robots a more accurate, complete understanding of their environment. For example, combining 3D vision data with color images (RGB data) can improve object recognition and the robot’s ability to truly understand a scene.
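A common first step in fusing depth with color is registration: each 3D point from the depth camera is moved into the color camera's frame with the depth-to-color extrinsics and then projected with the color camera's intrinsics to find its pixel. The sketch below assumes an ideal pinhole color camera without lens distortion and uses a tiny hypothetical image and calibration; it attaches an RGB value to each point, or None when the point falls outside the image or behind the camera.

```python
def project_to_color(p, R, t, fx, fy, cx, cy):
    """Depth-camera point -> color-camera pixel (u, v), or None if behind the camera."""
    # Extrinsics: express the point in the color camera's frame.
    x, y, z = (sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))
    if z <= 0:
        return None
    # Intrinsics: pinhole projection onto the image plane.
    return fx * x / z + cx, fy * y / z + cy


def colorize(points, image, R, t, intrinsics):
    """Attach the nearest color pixel to each 3D point (None if it is not visible)."""
    height, width = len(image), len(image[0])
    colored = []
    for p in points:
        uv = project_to_color(p, R, t, *intrinsics)
        if uv is None:
            colored.append(None)
            continue
        u, v = round(uv[0]), round(uv[1])
        colored.append(image[v][u] if 0 <= u < width and 0 <= v < height else None)
    return colored


# Hypothetical 4x4 color image whose pixel value encodes its own (u, v) position.
image = [[(u, v, 0) for u in range(4)] for v in range(4)]
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = (0.0, 0.0, 0.0)                # cameras co-located for this toy example
intrinsics = (2.0, 2.0, 1.0, 1.0)  # fx, fy, cx, cy
points = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0), (5.0, 0.0, 1.0), (0.0, 0.0, -1.0)]
print(colorize(points, image, R, t, intrinsics))
# [(1, 1, 0), (3, 1, 0), None, None]
```

Once each point carries both geometry and color, a recognition model can use appearance cues (labels, colors, printed patterns) that depth alone cannot provide.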

By combining data from multiple sources, robots can overcome the limitations of individual sensors and build a more robust and accurate perception system, improving the robotic system’s overall performance and reliability.

Embrace the Future of Robotics With Orbbec

Integrated 3D vision systems are changing the world of robotics, ushering in a new era of intelligent and autonomous machines. With greater perception capabilities, 3D vision is being used in various industries, from manufacturing and logistics to health care and transportation. Since 2013, Orbbec has been innovating in 3D vision, from sensors to AI. Streamline your development process with easy-to-integrate, high-performance robotics cameras.

Orbbec’s high-performing, customizable solutions are backed by world-class manufacturing and supply chain management. With global support and comprehensive ODM/OEM services, Orbbec is your perfect partner in 3D vision.

Fill out the contact form to consult about products or OEM services today.