When Depth Cameras Leave the Lab: What Actually Matters for Mobile Robots

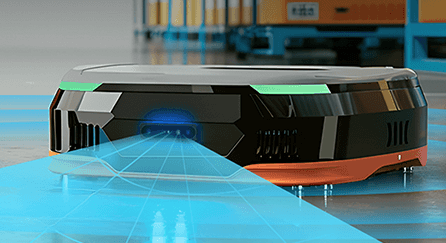

Not surprisingly, almost any 3D camera looks good when you evaluate it in a controlled lab setting. It’s once your prototype robot hits a warehouse floor or a hospital corridor that the environment becomes unpredictable: the robot has to survive fluctuating power, constant vibration, and optical noise that a datasheet never mentions. That’s why, when we talk about depth sensing for mobile robots, the conversation quickly shifts from raw resolution to how a sensor survives the transition from prototype to a fleet working in the real world. Our design services partner OLogic approaches vision through the lens of full-system integration, where selecting a camera is about how the sensor performs once it is part of a complete robotic platform designed for deployment.

Problem #1: Managing Light and Optical Noise

You might think indoor lighting is a controlled, stable environment that shouldn’t cause many issues compared to outdoors, but this is all too often not the case. In practice, lighting is one of the most critical variables for indoor depth sensing, because conditions are rarely consistent throughout a building. You might have overhead LED arrays, high-glare polished floors, and floor-to-ceiling windows or open doors that let in sunlight strong enough to completely wash out depth data. For a robot tasked with obstacle avoidance, the resulting blind spots are more than just a nuisance; they are a safety risk.

One of the key things OLogic has noted about Orbbec’s stereo vision cameras is that they are particularly effective in these conditions: they maintain a high-density point cloud even when a robot is looking directly at bright ceiling fixtures or navigating sun-drenched hallways. Consistency as lighting changes through the day is what actually keeps a navigation stack stable. By choosing sensors that tolerate high ambient light, OLogic’s engineers can keep depth sensing on the mobile robot reliable regardless of the building’s architecture or the time of day.
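One practical way to check whether a sensor holds up as lighting changes is to track its depth fill rate, the fraction of pixels that return a valid reading, over time. The sketch below assumes depth frames arrive as rows of millimeter values with 0 marking an invalid pixel (a common convention, but check your camera’s output format). `fill_rate`, `FillRateMonitor`, and the 0.8 threshold are hypothetical choices for illustration, not part of any Orbbec tool.

```python
from collections import deque


def fill_rate(depth_mm: list[list[int]]) -> float:
    """Fraction of pixels with a valid depth reading (assumes 0 = no reading)."""
    total = sum(len(row) for row in depth_mm)
    if total == 0:
        return 0.0
    valid = sum(1 for row in depth_mm for d in row if d > 0)
    return valid / total


class FillRateMonitor:
    """Flags sustained depth dropout, e.g. glare or sunlight washing out the scene."""

    def __init__(self, threshold: float = 0.8, window: int = 30):
        self.threshold = threshold            # hypothetical minimum acceptable fill rate
        self.history = deque(maxlen=window)   # fill rates of the most recent frames

    def update(self, depth_mm: list[list[int]]) -> bool:
        """Record one frame; return True if the windowed average falls below threshold."""
        self.history.append(fill_rate(depth_mm))
        avg = sum(self.history) / len(self.history)
        return avg < self.threshold


monitor = FillRateMonitor(threshold=0.8, window=3)
print(monitor.update([[1200, 0], [1180, 1175]]))  # one of four pixels invalid
```

Averaging over a window rather than reacting to a single frame avoids false alarms from momentary glare while still catching sustained washout, such as a hallway that fills with afternoon sun.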

Problem #2: Mechanical Packaging and Thermal Constraints

Mechanical integration is equally important because robotics is often the difficult art of fitting ten pounds of electronics into a five-pound bag. Between high-capacity batteries, drive motors, and cooling systems, the space left for vision sensors is usually cramped. A best-in-class sensor is rendered useless if its thermal footprint or physical size forces a total redesign of the robot’s chassis.

To overcome these challenges, OLogic looks for compact options, such as the Orbbec Gemini series, that offer flexible mounting, so its engineers can position sensors effectively without adding unnecessary mechanical complexity. Whether the camera is tucked into a bumper for toe clip detection or mounted on a high mast for human-robot interaction, it has to fit the robot’s physical envelope and provide the required field of view.
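Mounting height and tilt determine how much floor directly in front of the robot the camera cannot see. A simple geometry sketch, assuming a flat floor and a camera whose optical axis is pitched down by `tilt_deg` with a vertical field of view of `vfov_deg`; the function name and example values are hypothetical, not taken from any specific camera’s datasheet.

```python
import math


def ground_coverage(height_m: float, tilt_deg: float, vfov_deg: float) -> tuple[float, float]:
    """Return (near, far) horizontal ground distances in meters seen by the camera.

    Distances are measured from the point on the floor directly below the lens.
    tilt_deg is the pitch of the optical axis below horizontal; far is math.inf
    when the top edge of the field of view is at or above the horizon.
    """
    lower = math.radians(tilt_deg + vfov_deg / 2)  # steepest ray in the field of view
    upper = math.radians(tilt_deg - vfov_deg / 2)  # shallowest ray in the field of view
    if lower >= math.pi / 2:
        near = 0.0  # the lowest ray reaches straight down, so no blind zone in front
    else:
        near = height_m / math.tan(lower)
    far = height_m / math.tan(upper) if upper > 0 else math.inf
    return near, far


near, far = ground_coverage(0.2, 0.0, 60.0)
print(round(near, 2), far)
```

For example, `ground_coverage(0.2, 0.0, 60.0)` gives a near limit of about 0.35 m for a bumper-height camera looking straight ahead, so anything closer sits in a blind zone that another sensor or a steeper tilt has to cover.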

Problem #3: Reliability Through GMSL2 and Professional Interfaces

Interface reliability problems often go unnoticed until it’s too late, when they can be very expensive to correct, and they play a major role in production robotics. While USB 3.0 is common in development environments, it is often the weak link in a production mobile robot: constant vibration and cable strain can cause intermittent “ghost” disconnects that are a nightmare to debug in the field. That’s why, for multi-camera systems or high-reliability applications, OLogic looks for products built on automotive-proven standards like GMSL2 (Gigabit Multimedia Serial Link). Automotive serial interfaces such as GMSL2 are designed for electrically noisy environments and long cable runs, making them well suited to industrial robots where sensors may be mounted meters away from the compute unit.

Orbbec’s GMSL2-enabled cameras have helped OLogic significantly reduce this risk, addressing what had been a major headache for design firms striving to build reliable robots. Because the link tolerates long cable runs, sensors can be placed several meters away from the central computer without sacrificing reliability, which makes the overall build more robust. This architecture enables distributed camera placement with centralized processing, providing high-speed, low-latency data transmission that is highly resistant to the electromagnetic interference found in industrial environments.
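Whatever the interface, it helps to detect a dropped stream immediately rather than discovering it later in field logs. A minimal watchdog sketch, assuming each camera driver exposes some per-frame callback you can hook into; `StreamWatchdog` and the 0.5 s timeout are hypothetical, and the clock is injectable so the logic can be tested without real hardware.

```python
import time
from typing import Callable


class StreamWatchdog:
    """Flags cameras that have stopped delivering frames, e.g. after a link drop."""

    def __init__(self, timeout_s: float = 0.5, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s          # hypothetical: about 15 missed frames at 30 fps
        self.clock = clock                  # monotonic clock so wall-clock jumps don't matter
        self.last_frame: dict[str, float] = {}

    def on_frame(self, camera_id: str) -> None:
        """Call from each camera's frame callback; a camera is tracked from its first frame."""
        self.last_frame[camera_id] = self.clock()

    def stalled(self) -> list[str]:
        """Return IDs of cameras with no frame within timeout_s, sorted for stable output."""
        now = self.clock()
        return sorted(cid for cid, t in self.last_frame.items() if now - t > self.timeout_s)


# Simulated timeline using a fake clock instead of real cameras.
fake_now = [0.0]
wd = StreamWatchdog(timeout_s=0.5, clock=lambda: fake_now[0])
wd.on_frame("front")
wd.on_frame("rear")
fake_now[0] = 0.4
wd.on_frame("front")
fake_now[0] = 0.7
print(wd.stalled())
```

Here the rear camera has been silent for 0.7 s and is reported as stalled, while the front camera delivered a frame 0.3 s earlier and is not. In a real system the stalled list could trigger a reconnect attempt or a safe stop.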

Problem #4: Reducing Integration Time with Unified Software

Hardware is only half the battle, as the software stack must be equally robust. For engineering teams building on embedded Linux or ROS 2, the integration time can be a significant bottleneck. The Orbbec SDK has allowed OLogic’s team to maintain a unified software interface across different camera models, which simplifies the transition from a prototype using one sensor technology to a production unit using another. This level of compatibility lets developers focus on higher-level application logic rather than low-level driver debugging.
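Application code can follow the same principle by depending on a thin, model-agnostic interface rather than on a particular camera. The sketch below is illustrative only: `DepthSource`, `DepthFrame`, `FakeSource`, and `nearest_obstacle_mm` are hypothetical names, not Orbbec SDK classes, and a real adapter would wrap whichever SDK calls or ROS 2 topics your platform uses.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class DepthFrame:
    width: int
    height: int
    depth_mm: list[int]   # row-major depth values; 0 = no reading (assumed convention)
    timestamp_s: float


class DepthSource(Protocol):
    """Hypothetical interface that application logic codes against."""

    def start(self) -> None: ...
    def read(self) -> DepthFrame: ...
    def stop(self) -> None: ...


class FakeSource:
    """Stand-in backend for tests; a real adapter would wrap the vendor SDK."""

    def __init__(self, value_mm: int = 1000):
        self.value_mm = value_mm
        self.t = 0.0

    def start(self) -> None:
        self.t = 0.0

    def read(self) -> DepthFrame:
        self.t += 1 / 30  # simulate a 30 fps stream
        return DepthFrame(4, 3, [self.value_mm] * 12, self.t)

    def stop(self) -> None:
        pass


def nearest_obstacle_mm(source: DepthSource) -> Optional[int]:
    """Application logic that depends only on DepthSource, not on a camera model."""
    frame = source.read()
    valid = [d for d in frame.depth_mm if d > 0]
    return min(valid) if valid else None


src = FakeSource(1500)
src.start()
print(nearest_obstacle_mm(src))
src.stop()
```

Swapping a prototype sensor for a production one then means writing a new adapter, while functions like `nearest_obstacle_mm` stay unchanged and can be tested against the fake backend.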

Problem #5: Scaling to Multi-Camera Perception

As mobile robots become more capable, many platforms are moving beyond single-camera perception systems. Modern robots often require multiple depth sensors to achieve 360° environmental awareness, redundancy for safety-critical applications, and improved perception in cluttered environments. However, scaling from one sensor to several introduces additional architectural challenges around camera synchronization, bandwidth management, and compute resource allocation.
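Bandwidth is the easiest of these challenges to estimate up front. The sketch below adds up raw, uncompressed stream rates (width × height × bits per pixel × frames per second) and compares the total against a link budget. The stream settings and the 3000 Mbit/s budget are hypothetical examples; protocol overhead, on-camera compression, and how streams share a host controller will all change the real numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stream:
    """One uncompressed video stream from a camera (depth, color, or IR)."""
    width: int
    height: int
    bits_per_pixel: int
    fps: int

    def mbps(self) -> float:
        # Raw payload rate in megabits per second, ignoring protocol overhead.
        return self.width * self.height * self.bits_per_pixel * self.fps / 1e6


def total_mbps(cameras: list[list[Stream]]) -> float:
    """Sum the raw bitrate of every stream on every camera."""
    return sum(s.mbps() for cam in cameras for s in cam)


def fits_budget(cameras: list[list[Stream]], link_budget_mbps: float) -> bool:
    """True if the aggregate raw bitrate fits within the usable link budget."""
    return total_mbps(cameras) <= link_budget_mbps


# Hypothetical per-camera setup: 640x480 16-bit depth plus 1280x720 RGB888 color, both at 30 fps.
depth = Stream(640, 480, 16, 30)
color = Stream(1280, 720, 24, 30)
camera = [depth, color]

print(round(total_mbps([camera]), 3))
print(fits_budget([camera] * 3, 3000.0), fits_budget([camera] * 4, 3000.0))
```

With these example settings each camera needs about 811 Mbit/s, so three cameras (about 2.4 Gbit/s) fit the hypothetical budget while four (about 3.2 Gbit/s) do not. Running the numbers this way early shows whether cameras can share one host link or need separate links, lower resolutions, or lower frame rates.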

Conclusion

Ultimately, there is no such thing as a universally best 3D camera for robotics. The right choice always depends on the specific application, the orientation of the sensor, and the production considerations of the build.

OLogic has found that designing a scalable perception architecture early in development is the most effective way to mitigate engineering risk as a system evolves. By evaluating Orbbec’s portfolio within a full system context and leveraging tools like the Orbbec unified SDK for multi-model management and GMSL2 interfaces for distributed placement, their team ensures the electronics, software, and mechanical design work together as a cohesive platform.

Whether the challenge is managing ambient light on a hospital floor or scaling a multi-camera array across an autonomous fleet, the goal remains the same: a reliable, production-ready system that performs just as well in the real world as it did on the workbench.

Frequently Asked Questions

1. Why do depth cameras perform differently in real-world environments compared to lab settings?

Lab environments offer controlled conditions that don’t reflect real-world challenges. Production environments introduce fluctuating power, constant vibration, optical noise from variable lighting (LED arrays, reflective floors, sunlight through windows), and electromagnetic interference. These factors can cause depth data dropouts and navigation failures that never appear during controlled testing. Selecting cameras designed for environmental robustness, with features like high ambient light tolerance and automotive-grade interfaces, helps ensure reliable performance in deployment.

2. What camera interface is best for production mobile robots?

While USB 3.0 is common in development, it often becomes a weak link in production due to cable strain and vibration causing intermittent disconnects. For multi-camera systems or high-reliability applications, automotive-proven standards like GMSL2 (Gigabit Multimedia Serial Link) are recommended. GMSL2 is designed for electrically noisy environments and supports long cable runs of several meters, making it ideal for industrial robots where sensors may be mounted far from the compute unit.

3. How does Orbbec’s unified SDK simplify multi-camera robot development?

The Orbbec SDK provides a unified software interface across different camera models, which simplifies transitioning from prototype to production. Engineering teams building on embedded Linux or ROS 2 can maintain consistent code when switching between sensor technologies. This compatibility reduces integration time and lets developers focus on higher-level application logic rather than low-level driver debugging, accelerating the path from development to fleet deployment.

Build Production-Ready Mobile Robots With Orbbec

From prototype to fleet deployment, our 3D vision solutions are engineered for the real-world challenges of mobile robotics. Explore our portfolio or consult with our experts to find the right depth sensing solution for your application.